White Paper

Survival of the Fastest: A Practical Framework for Risk-Based Data Management

Executive Summary:

- RBDM can be implemented independently. CDM teams should modernize data workflows without waiting for enterprise-wide RBQM maturity.

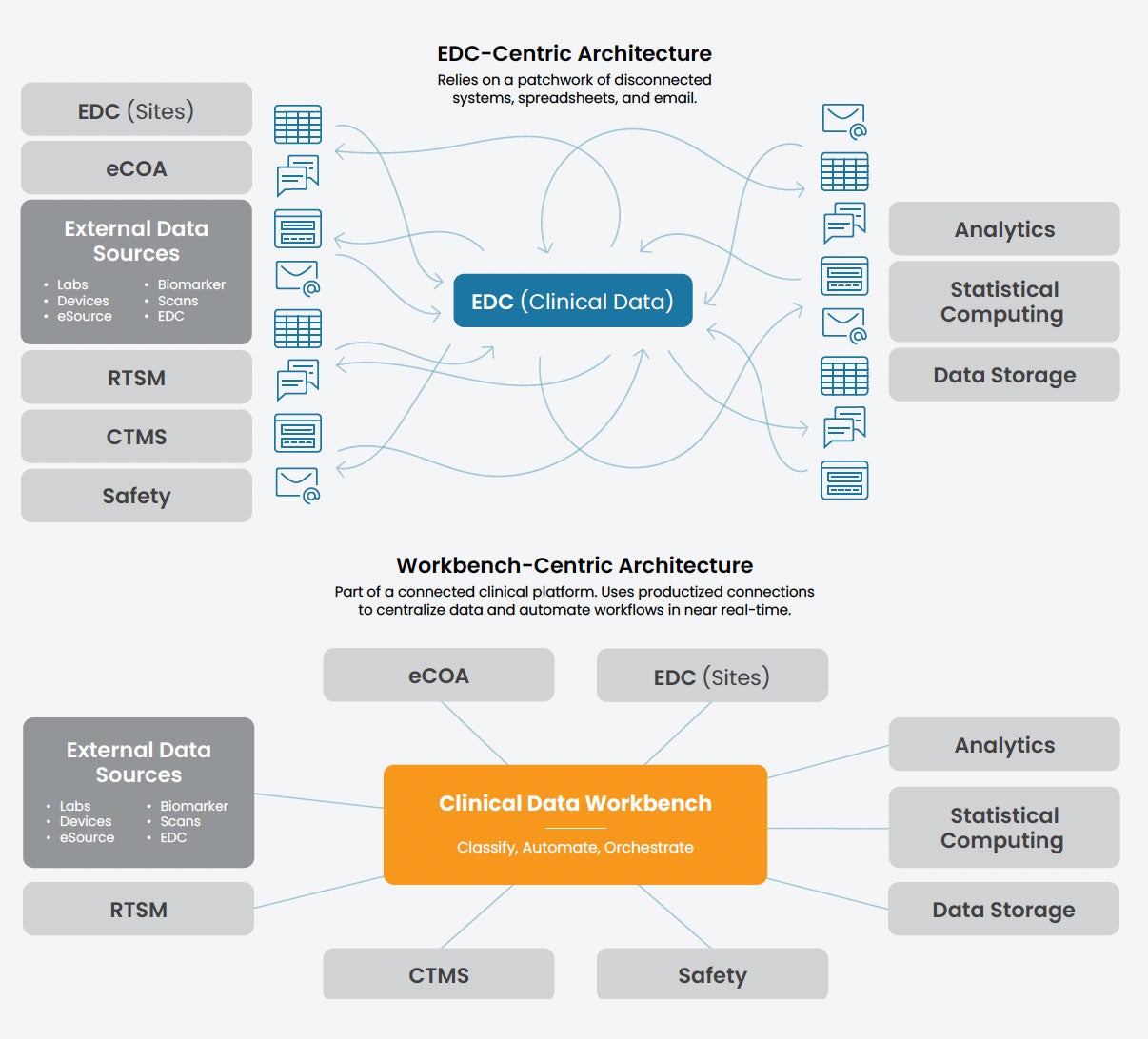

- Transitioning to a clinical data workbench replaces legacy EDC-centric bottlenecks with a centralized, automated command center for all data.

- Focusing on “errors that matter” through classification and automation significantly improves operational efficiency and clinical data integrity.

I. Introduction: Breaking implementation paralysis

For over a decade, regulatory bodies have signaled a clear shift toward risk-based approaches in clinical trials. The promise for clinical data teams is revolutionary: leaner operations, faster timelines, and a laser focus on the data that actually matters. Yet, walk into almost any clinical data management (CDM) department today, and you’ll find a landscape still dominated by legacy check-everything processes.

The gap between traditional methods and modern reality is reaching a breaking point. An unprecedented surge in data volume—driven by electronic clinical outcome assessment (eCOA), specialized labs, wearables, etc.—means that 100% manual review is now an operational bottleneck. In today’s high-velocity environment, exhaustive review doesn’t guarantee quality, and may do more harm than good. A recent survey found that 69% of data managers and clinical research associates (CRAs) worry that data quality suffers as a result of inefficient cleaning and review.

The paralysis of “wait-and-see”

ICH E6(R3) establishes risk-based quality management (RBQM) as a standard requirement; at the same time, it has locked many CDM departments into a state of implementation paralysis.

There is a tendency to wait for a unified, enterprise-wide RBQM solution before evolving data management practices. This wait-and-see approach often stems from a belief that risk-based data management (RBDM) cannot exist until a mature RBQM framework is fully operational.

Meanwhile, the pressure is mounting. Beyond regulatory shifts, there is a relentless internal drive to improve efficiency, reduce costs, and outperform competitors. In this environment, RBDM is no longer a nice-to-have for the future; it is a survival requirement for the present.

Empowering CDM to lead

Three factors have historically hindered progress in implementing RBDM:

Strategic ambiguity

Lack of clarity on how RBDM functions as a standalone discipline within a larger RBQM initiative.

Technical constraints

Reliance on legacy electronic data capture (EDC)-centric architectures that are not designed to support targeted, risk-based workflows.

Change management gaps

Lack of organizational commitment to modernize legacy processes, reskill teams, and redefine roles.

RBDM and RBQM are fundamentally linked, yet distinct. This means that CDM teams can—and should—implement RBDM independently. CDM should not wait for a total organizational overhaul to begin modernizing. Just as clinical operations has modernized its workflows through the adoption of risk-based monitoring, CDM can advance its functional evolution by establishing RBDM as a data-specific contribution to the study’s overall quality framework.

This paper provides a practical framework for executing RBDM independently from enterprise-wide RBQM initiatives. We will outline the core technology requirements and process shifts needed to establish a risk-based foundation today, allowing CDM to drive organizational efficiency by clarifying its own role in a risk-based future.

II. Synchronous independence: Defining the RBQM-RBDM relationship

The all-or-nothing approach to risk-based methodologies leads to stagnation. When the boundaries between RBQM and RBDM are blurred, CDM teams often over-scope their efforts—attempting to architect a comprehensive enterprise solution instead of modernizing specific data workflows. To make tangible progress, we must clarify how these concepts nest within one another.

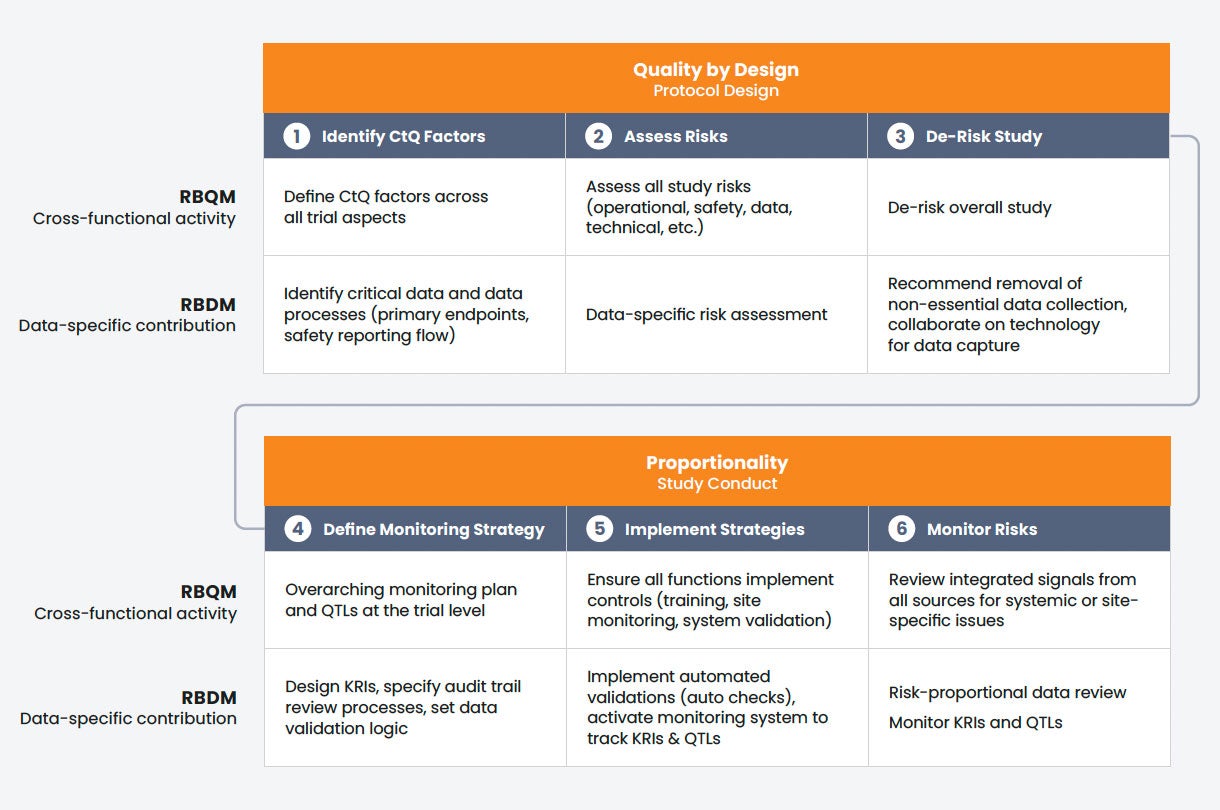

The RBQM framework: Quality by Design + proportionality

RBQM is the overarching discipline that governs the trial from concept to close-out. According to ICH E6(R3), it is defined by two fundamental principles that span the planning and execution phases of a trial:

Quality by Design (QbD)

THE PLANNING PHASE

QbD mandates a proactive shift from reactive error-catching to preventive design. By identifying Critical to Quality (CtQ) factors during protocol development, cross-functional teams work to remove unnecessary complexity and mitigate risks before the first patient is even enrolled.

Proportionality

THE EXECUTION PHASE

Proportionality ensures that trial activities are fit-for-purpose during the study. It mandates that resource allocation be proportionate to risk (higher risk gets more rigor) and proportionate to value (CtQ factors get more assurance), while minimizing burden by eliminating activities that do not enhance participant protection or critical data reliability.

Together, these principles ensure the right level of oversight is applied to the right areas at the right time across all functional disciplines.

RBDM: The data-specific engine

RBDM is simply the application of these two RBQM principles to the clinical data lifecycle (Table 1). It is one of several functional components—alongside clinical operations, safety, and supply—that make up the total RBQM program.

While the broader RBQM program addresses trial-wide risks—such as cold-chain breaks in Investigational Product (IP) handling or site-level informed consent issues—RBDM focuses specifically on the integrity of the evidence stream, ensuring the fidelity of the data most critical to the trial’s primary objectives, patient safety, and efficacy results.

RBDM planning (QbD)

During study design, CDM teams identify and assess risks specifically for critical data points and data-processing workflows. This ensures the study is built to capture the data that matters with the right quality controls from the start.

RBDM execution (Proportionality)

During the trial, CDM orchestrates risk-based data review. Rather than a blanket check-everything approach, effort is prioritized for data essential to trial outcomes and patient safety, with a focus on targeted review by scenario.

Beyond this targeted review, CDM collects data on key risk indicators (KRIs) and serves as an air traffic controller for many of the trial’s risk signals. By routing these signals to the appropriate functional teams, whether clinical operations, safety, or medical, CDM enables these teams to monitor KRIs against thresholds and to respond as quickly as possible when a threshold is crossed.

The opportunity for CDM

The key for CDM leaders is recognizing that because RBDM is a functional component of RBQM, it can be activated within its own domain. CDM does not need to solve for every trial-wide risk factor simultaneously to begin modernizing. By securing the infrastructure to isolate CtQ data and establishing the communication loops for risk signals now, CDM provides the operational foundation that eventually supports the broader enterprise RBQM activation.

TABLE 1

RBDM vs. RBQM

RBDM applies the core principles of RBQM specifically to the clinical data lifecycle.

| | Quality by Design (study design phase) | Proportionality (study conduct) |
|---|---|---|
| RBQM (cross-functional activity) | Define Critical to Quality factors and assess risks across all trial aspects | Define and execute overarching risk monitoring plan at the trial level |
| RBDM (data-specific contribution) | Identify and assess risks for critical data and data-related processes | Orchestrate cross-functional, risk-based data review |

III. The great tech shift: From EDC-centric to workbench-centric

To implement RBDM, we must first address a structural hurdle: the traditional, EDC-centric technology architecture. For decades, the EDC system has been the center of the universe for clinical data. While this model served the industry well during the era of paper-to-digital transformation, it is not designed to handle the modern explosion of data from digital sources.

The EDC bottleneck

Today, EDC is just one of a growing number of data sources, yet many CDM teams continue to treat it as the primary tool for data management. This mismatch creates several critical issues:

Inefficient workarounds

Teams are forced to rely on a patchwork of manual processes—spreadsheets, emails, and custom statistical analysis system (SAS) listings—to aggregate and clean non-EDC data (e.g., eCOA and central labs).

Fragmented visibility

When data is scattered across disconnected solutions, getting a holistic view of trial quality is nearly impossible.

A blocker to RBDM

Most directly to the point of this paper, this legacy architecture lacks the real-time integration and automation required for risk-based workflows. You cannot effectively monitor KRIs or orchestrate targeted reviews when your data is siloed in a tool designed for static reporting rather than actionable analysis.

In short: EDC as the go-to CDM tool has become a primary hurdle to risk-based evolution.

Defining the clinical data workbench

In response to the challenges outlined above, the industry is undergoing a fundamental shift. In this new reality, EDC is no longer the hub for CDM; it is a spoke for site-entered data. The real command center for the next generation of CDM is the clinical data workbench (Figure 1).

A clinical data workbench is a centralized environment, part of a unified clinical platform, where data from all sources is aggregated, transformed, and cleaned in near real-time. Unlike EDC systems, the workbench allows CDM and other stakeholders to see all trial data in one place, providing a single source of truth where data can be interrogated and actioned across different teams.

A workbench is more than a nice-to-have upgrade; it is the technical requirement for any successful RBDM or RBQM strategy. By moving the center of gravity from the EDC to the workbench, CDM teams gain the infrastructure necessary to move beyond manual data cleaning and start executing the core capabilities of RBDM.

FIGURE 1

EDC-centric vs. workbench-centric architectures

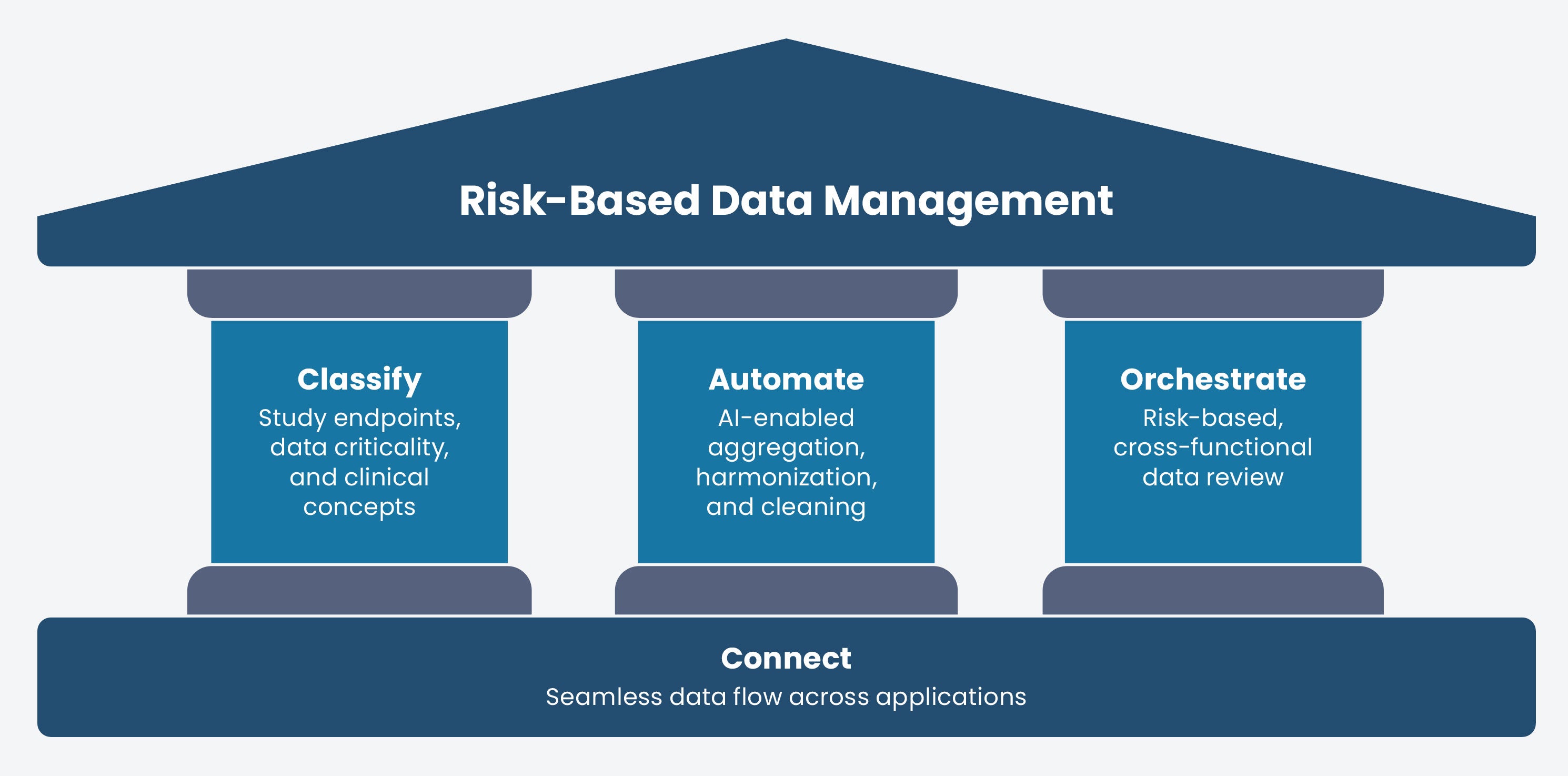

The pillars of actionable RBDM

As clinical data workbenches emerge as a product category, CDM leaders face a choice among tools that offer varying features. To move beyond implementation paralysis, organizations must evaluate workbench technology on its ability to serve as the functional engine for risk-based workflows. The following pillars define the core capability requirements for a workbench, ensuring it can execute RBDM independently today while remaining extensible enough to anchor a broader RBQM program tomorrow.

FIGURE 2

The pillars of actionable RBDM

| Pillar | Capability | Requirement |
|---|---|---|
| Classify | Identification of Critical to Quality (CtQ) factors | The workbench must recognize and track critical data, endpoints, and clinical concepts. This allows CDM to prioritize resources and oversight where they are needed most to protect trial outcomes and patient safety. |
| Automate | Automation of data flows and actions | The workbench must automate data flows and key actions across applications to reduce cycle times and free teams from rote tasks. This includes ingestion, harmonization, and cleaning, as well as external actions like protocol deviation detection or query generation. |
| Orchestrate | Cross-functional workflow management | The workbench must act as an orchestrator, providing medical, safety, and clinical operations teams with complete and current data. It facilitates immediate action through automated task assignment and escalation when risk thresholds are crossed. |
| Connect | Purpose-built platform | Effective RBDM requires a natively-integrated foundation, rather than bolted-on point solutions, to ensure the structural integrity needed to scale automation and orchestration across the clinical ecosystem. |

IV. Three phases to implementation

The classify, automate, and orchestrate pillars of RBDM can be implemented across three phases.

| 1. Prepare: Focus on process and operations | 2. Implement: Deploy the workbench | 3. Optimize: Activate intelligence |
|---|---|---|
| Audit historical data to identify noise queries, standardize global libraries, and identify critical data points to transition from “zero errors” to “zero errors that matter.” | Establish a central workbench to automate repetitive tasks (ingestion, reconciliation, and queries) while replacing manual trackers with real-time, cross-functional dashboards. | Introduce integrated classification so the system understands data intent, evolving from basic rules to an autonomous central nervous system that scales automation. |

Phase 1: Prepare

Even without a workbench, teams can begin to execute the Classify pillar (Figure 2) by focusing on foundational operational processes.

Audit query effectiveness

The goal here is to prove that more checking doesn’t equal better data. By analyzing historical query data, CDM can identify where manual effort is wasted on noise.

ACTION

Conduct an impact-to-effort audit. Analyze your last three study locks. What percentage of queries actually resulted in a change to a critical value versus a cosmetic fix (e.g., “Please clarify” on a non-endpoint field)? Use this data to justify the cessation of certain manual checks.
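The audit arithmetic can be sketched as follows. This is a minimal illustration, assuming a hypothetical query export in which each record carries two flags, `is_critical` and `data_changed`; neither name comes from a specific EDC product, and the sample records are invented.

```python
from dataclasses import dataclass

@dataclass
class Query:
    field: str
    is_critical: bool   # hypothetical flag: field maps to an endpoint or safety value
    data_changed: bool  # hypothetical flag: the query led to a data change

def audit(queries):
    """Return the share of queries that fall into each impact bucket."""
    total = len(queries)
    if total == 0:
        return {}
    counts = {"critical_change": 0, "noncritical_change": 0, "no_change": 0}
    for q in queries:
        if not q.data_changed:
            counts["no_change"] += 1
        elif q.is_critical:
            counts["critical_change"] += 1
        else:
            counts["noncritical_change"] += 1
    return {bucket: n / total for bucket, n in counts.items()}

# Invented sample records, for illustration only.
sample = [
    Query("primary_endpoint_score", True, True),
    Query("visit_comment", False, False),
    Query("visit_comment", False, True),
    Query("ae_start_date", True, False),
]
result = audit(sample)
```

A low `critical_change` share alongside a high `no_change` share is the kind of evidence that can support retiring a manual check.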

Reinforce global standards

Standardizing isn’t just about CDISC compliance; it’s about making data automation-ready. If every study uses a different naming convention for Weight variables or Lab Codes, a workbench cannot automatically aggregate and run risk-based KRIs across your portfolio.

ACTION

Tighten your global library. Ensure that critical data points (Tier 1) use identical structures across all protocols. Standardize the metadata (tags) for clinical concepts so that an automated system can eventually recognize an Adverse Event or Primary Endpoint regardless of the source.
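One way to picture concept tagging is a synonym table that maps study-specific variable names to a single standard tag. The sketch below is an assumption for illustration: the tag names and synonyms are hypothetical and are not drawn from CDISC or any vendor library.

```python
# Hypothetical synonym table: study-specific names mapped to one standard concept tag.
CONCEPT_SYNONYMS = {
    "WEIGHT": {"weight", "wt", "body_weight", "vsweight"},
    "ADVERSE_EVENT": {"ae", "aeterm", "adverse_event"},
}

def tag_variable(name):
    """Return the standard concept tag for a variable name, or None if unmapped."""
    key = name.strip().lower()
    for concept, synonyms in CONCEPT_SYNONYMS.items():
        if key in synonyms:
            return concept
    return None

def unmapped(names):
    """List the variable names that still need a global-library decision."""
    return [n for n in names if tag_variable(n) is None]
```

Running `unmapped` over a study's variable list gives a quick worklist of names that would block automated aggregation across the portfolio.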

Identify critical data

Even without a formal RBQM plan, CDM can host a Data Criticality Workshop with clinical operations and statistics teams to map the protocol’s primary and secondary endpoints to specific data points.

ACTION

Create a data criticality matrix. Identify which data points directly support efficacy and safety, and designate them as “Tier 1” to ensure they receive prioritized oversight regardless of the broader trial status.
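A data criticality matrix can start as a very small structure. The sketch below assumes a hypothetical list of data points, each paired with the objective it supports (or `None`); the data point names are invented for illustration.

```python
from enum import IntEnum

class Tier(IntEnum):
    CRITICAL = 1      # Tier 1: directly supports efficacy or safety
    NON_CRITICAL = 2  # Tier 2: everything else

# Hypothetical matrix rows: (data point, supported objective or None).
MATRIX = [
    ("tumor_measurement", "primary efficacy endpoint"),
    ("serious_adverse_event", "safety"),
    ("visit_comment", None),
]

def assign_tier(objective):
    """A data point is Tier 1 only if it supports a named objective."""
    return Tier.CRITICAL if objective else Tier.NON_CRITICAL

tiers = {point: assign_tier(objective) for point, objective in MATRIX}
tier1 = sorted(point for point, tier in tiers.items() if tier is Tier.CRITICAL)
```

In practice the objective column would be filled in during the workshop, and the resulting tiers would drive which checks stay manual.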

Assess data-specific risk

CDM is uniquely positioned to lead a data vulnerability assessment. While RBQM looks at site or patient risks, CDM can partner with other teams to assess the journey of the data.

ACTION

Conduct a data flow risk review. Partner with clinical operations to identify where manual data hand-offs or complex third-party integrations (e.g., specialized labs) could introduce errors or delays in the evidence stream.

De-risk the study

This is where CDM and biostatistics engage in high-value collaboration to streamline the data collection strategy. By analyzing the data volume against the protocol, both teams can highlight “noise”—data being collected that does not support an endpoint or safety requirement.

ACTION

Perform a protocol complexity audit. Recommend the removal of non-essential data fields that increase site burden without adding value to the final analysis, effectively de-risking the study through simplification.

Define data quality KRIs

A complete set of KRIs and quality tolerance limits (QTLs) requires a holistic assessment in partnership with clinical operations, and will need to be revisited as the full RBQM program is defined. However, CDM can independently design data quality KRIs focused on the health of the database, such as query aging, rate of missing critical data, or eCOA compliance.

ACTION

Define a set of data quality KRIs. Work with the study team to define a set of KRIs and QTLs specific to data quality. This allows CDM to flag when data-specific risks require cross-functional attention.
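As a rough sketch, two of the data quality KRIs mentioned above (rate of missing critical data and query aging) can be computed and compared against limits as shown here. The threshold values, function names, and sample numbers are assumptions for illustration; real limits would be agreed with the study team.

```python
from datetime import date
from statistics import median

# Hypothetical limits for illustration; real values come from the study team.
THRESHOLDS = {"missing_critical_rate": 0.05, "median_query_age_days": 14}

def missing_critical_rate(expected, received):
    """Fraction of expected critical data points not yet received."""
    return 0.0 if expected == 0 else (expected - received) / expected

def median_query_age_days(opened_dates, today):
    """Median age, in days, of the open queries."""
    ages = [(today - opened).days for opened in opened_dates]
    return median(ages) if ages else 0

def breaches(values):
    """Names of the KRIs whose value exceeds its limit."""
    return [name for name, value in values.items() if value > THRESHOLDS[name]]

today = date(2024, 6, 30)
values = {
    "missing_critical_rate": missing_critical_rate(expected=200, received=184),
    "median_query_age_days": median_query_age_days(
        [date(2024, 6, 1), date(2024, 6, 20), date(2024, 6, 25)], today
    ),
}
flagged = breaches(values)
```

Here only the missing-data rate exceeds its limit, so that KRI alone would be raised for cross-functional attention.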

Phase 2: Implement the workbench

The workbench provides the foundational infrastructure to action RBDM during the study conduct phase. In this phase, CDM begins to move beyond the process-heavy preparation of Phase 1 and starts utilizing the workbench to execute the Classify, Automate, and Orchestrate pillars (Figure 2).

Configure criticality logic

Classification is the newest capability area for the workbench. Initially, critical data will be identified and classified outside the system, as discussed in Phase 1. The workbench can then be programmed to trigger action on these data points when certain conditions are met. However, as we will see in the next section, “Optimize,” integrated data classification is on the horizon and will enable next-level automation and orchestration without programming intervention.

Automate high-volume tasks

The automation of repetitive, high-volume tasks is often where teams realize the most immediate impact from workbench implementation. The following figures estimate the automation coverage available to CDM teams today, based on Veeva implementation data. These capabilities are expected to expand significantly as AI and system connectivity continue to advance.

The impact of task automation

Organizations are realizing significant operational savings by using workbenches to automate high-volume, repetitive tasks.

Aggregation and harmonization

A workbench centralizes data from all sources, including third-party providers. By automatically normalizing or harmonizing disparate data into a common format, it eliminates the significant time and effort previously spent on manual transformations.

External data reconciliation

Automation now handles nearly all vendor reconciliation use cases. When external data is integrated into a centralized environment, most records are reconciled automatically at the point of entry or through programmed logic.
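The programmed logic behind a basic reconciliation can be sketched as a key match between two record sets. This is a simplified illustration, not any vendor's implementation; the key fields (`subject_id`, `visit`, `sample_date`) and sample records are assumptions.

```python
def reconcile(edc_records, vendor_records,
              key_fields=("subject_id", "visit", "sample_date")):
    """Match EDC and vendor records on key fields and report the differences."""
    def key(record):
        return tuple(record[f] for f in key_fields)

    edc_keys = {key(r) for r in edc_records}
    vendor_keys = {key(r) for r in vendor_records}
    return {
        "matched": sorted(edc_keys & vendor_keys),
        "missing_from_vendor": sorted(edc_keys - vendor_keys),
        "missing_from_edc": sorted(vendor_keys - edc_keys),
    }

# Invented sample records, for illustration only.
edc = [
    {"subject_id": "001", "visit": "V1", "sample_date": "2024-01-10"},
    {"subject_id": "002", "visit": "V1", "sample_date": "2024-01-11"},
]
vendor = [
    {"subject_id": "001", "visit": "V1", "sample_date": "2024-01-10"},
    {"subject_id": "003", "visit": "V1", "sample_date": "2024-01-12"},
]
report = reconcile(edc, vendor)
```

Only the two unmatched keys would need human attention; the matched record is reconciled without review.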

Structural validations

Modern workbenches automate the detection of structural data issues. Through automated change detection, systems immediately alert teams to unexpected modifications or inconsistencies in the data stream without manual oversight.

Automated query management

Up to 50% of traditional manual queries can be eliminated through automated checks. This allows the system to handle routine clean-up while humans focus on critical data interrogation.

Programmatic protocol deviations

By applying automation across EDC and third-party data sources, approximately 30–50% of protocol deviations can be automatically detected and documented in the clinical trial management system (CTMS). This shift ensures that deviations are identified in near real-time rather than weeks later.

Centralize trial oversight

In legacy environments, orchestration relies on disconnected spreadsheets and emails that are often siloed or obsolete. This inefficiency introduces quality risks and obscures the audit trail. The workbench serves as a single source of truth, providing a centralized environment for complete and current trial data and enabling coordination of risk-based actions across functional silos.

With a workbench, CDM teams can facilitate risk-based review through:

Real-time progress tracking

Centralized data enables teams to maintain a holistic view of trial progress and to monitor status via visualizations in near real-time. Key tools include:

- Change detection: Alerts users only when data is new or modified, eliminating redundant reviews of unchanged records.

- Clean patient tracker: Provides a visual, real-time status of data readiness for every participant.

- Query metrics dashboard: Analyzes metrics like query source, age, and impact on data changes so teams can evaluate and optimize cleaning efficacy.

- Study dashboard: Prioritizes daily tasks and assignments to keep the team focused on immediate needs.
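Change detection, the first tool in the list above, can be approximated by comparing content fingerprints between two data snapshots. The sketch below is one possible approach under stated assumptions (records are dictionaries keyed by a record ID); it does not describe how any particular workbench implements the feature.

```python
import hashlib
import json

def fingerprint(record):
    """Stable hash of a record's content; keys are sorted so field order is ignored."""
    payload = json.dumps(record, sort_keys=True, default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def detect_changes(previous, current):
    """Compare two snapshots keyed by record ID; return IDs that are new or modified."""
    new = [rid for rid in current if rid not in previous]
    modified = [
        rid for rid in current
        if rid in previous and fingerprint(current[rid]) != fingerprint(previous[rid])
    ]
    return {"new": new, "modified": modified}

# Invented snapshots, for illustration only.
previous = {"r1": {"hr": 72}, "r2": {"hr": 80}}
current = {"r1": {"hr": 72}, "r2": {"hr": 88}, "r3": {"hr": 65}}
changes = detect_changes(previous, current)
```

Record `r1` is unchanged and therefore never reaches a reviewer, which is the point of the technique.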

Collaboration and task assignment

Teams communicate observations and assign tasks directly within the tool, reducing the need for spreadsheet trackers and emails.

Centralized risk signal routing

Acting as an “air traffic controller,” the workbench routes risk signals to medical review, safety, or clinical operations. When thresholds are crossed, the system automatically assigns tasks and escalates issues.
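A minimal sketch of threshold-based routing follows. The routing table, KRI names, and the two-level threshold (action versus escalation) are hypothetical choices made for illustration, not a description of a specific product.

```python
from dataclasses import dataclass

@dataclass
class Signal:
    kri: str
    value: float
    threshold: float             # crossing this creates a task
    escalation_threshold: float  # crossing this also escalates the task

# Hypothetical routing table: KRI name mapped to the owning team.
ROUTES = {
    "sae_reporting_delay_days": "safety",
    "missed_visit_rate": "clinical operations",
    "out_of_range_lab_rate": "medical review",
}

def route(signal):
    """Return a task for the owning team when a threshold is crossed, else None."""
    if signal.value <= signal.threshold:
        return None
    return {
        "team": ROUTES.get(signal.kri, "data management"),  # default owner is an assumption
        "kri": signal.kri,
        "escalate": signal.value > signal.escalation_threshold,
    }

task = route(Signal("missed_visit_rate", value=0.12, threshold=0.10, escalation_threshold=0.20))
```

In this example the missed-visit signal produces a task for clinical operations without escalation, because the value sits between the two thresholds.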

Integrated audit readiness

The system captures review progress and third-party data approvals as they occur. This eliminates manual trackers and PDFs, ensuring documentation is always current for auditors.

Impact on trial quality

By replacing manual trackers with a single source of truth, the workbench provides the transparency required to meet broader RBQM goals. Leaders can monitor metrics in real-time, allowing the study team to respond instantly when risk thresholds are reached.

A note on change management: Evolving roles and processes

While the workbench provides the technical capacity for RBDM, its success depends on an organizational commitment to evolve legacy roles and entrenched processes. Transitioning from reactive data cleaning to proactive oversight requires a deliberate shift in both mindset and operations.

Guidance for the transition

- Modernize workflows: Move teams away from the fear-based goal of zero errors toward the strategic goal of zero errors that matter, explicitly stopping manual checks on the least-critical data.

- Reskill for next-generation data management: As automation handles rote tasks, data managers must be upskilled in risk analysis, signal detection, and the strategic oversight of the evidence stream.

- Establish cross-functional buy-in: Ensure clinical operations, medical review, and safety stakeholders are aligned on the new data flow, trusting the workbench as the single source of truth for risk-based actions. Cross-functional stakeholders should be trained on the workbench simultaneously.

- Showcase early wins: Use immediate efficiency gains from Phase 2—such as faster ingestion or reduced manual queries—to build momentum for the optimization phase.

Phase 3: Optimize

As both technology and the broader RBQM framework mature, CDM teams move into the final stage of the RBDM evolution: Optimization. While Phase 1 laid the process groundwork and Phase 2 established the technical engine, Phase 3 introduces integrated classification. This enables the workbench to move from a rules-based tool to an intelligent system that understands the intent of the data, driving higher levels of automation and orchestration.

Embed native data intelligence

In this phase, the system recognizes CtQ factors natively. In the workbench, this process begins in a centralized Data Catalog, the evolution of the traditional Risk Assessment and Categorization Tool (RACT). By recording study endpoints and objectives directly in the catalog, CDM informs the system of the nature and criticality of every data point, allowing the workbench to distinguish high-stakes data from lower-priority information. Once these CtQ factors are identified, stakeholders can apply the principle of proportionality to ensure vital data receives the highest level of oversight. This framework is designed to eventually scale beyond clinical data into operational data, providing the system with the intent necessary to automate downstream workflows and validations based on relative importance.

Drive intent-based automation

Once data is categorized, the workbench shifts from a passive repository to an active engine capable of automating cleaning and operational tasks across clinical applications in a manner proportional to data criticality. By leveraging the system’s inherent understanding of data types, the workbench can perform cross-source reconciliations—such as aligning AE and ConMed dates—without manual programming. This potential extends further into workflow generation, where risk classifications can be used to automatically generate SDV (source data verification) plans.
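To make the AE and ConMed example concrete, the sketch below shows one plausible date-alignment rule: a ConMed linked to an AE should reference an existing AE and should not start before that AE begins. The rule, field names, and sample records are assumptions for illustration; actual reconciliation rules are study-specific.

```python
from datetime import date

def check_ae_conmed(aes, conmeds):
    """Flag ConMeds that reference a missing AE or start before the linked AE begins."""
    ae_start = {ae["ae_id"]: ae["start"] for ae in aes}
    issues = []
    for cm in conmeds:
        linked = cm.get("ae_id")
        if linked is None:
            continue  # ConMed not given for an AE; nothing to reconcile
        if linked not in ae_start:
            issues.append((cm["cm_id"], "linked AE not found"))
        elif cm["start"] < ae_start[linked]:
            issues.append((cm["cm_id"], "ConMed starts before AE"))
    return issues

# Invented sample records, for illustration only.
aes = [{"ae_id": "AE1", "start": date(2024, 2, 10)}]
conmeds = [
    {"cm_id": "CM1", "ae_id": "AE1", "start": date(2024, 2, 11)},
    {"cm_id": "CM2", "ae_id": "AE1", "start": date(2024, 2, 1)},
    {"cm_id": "CM3", "ae_id": "AE9", "start": date(2024, 2, 12)},
    {"cm_id": "CM4", "ae_id": None, "start": date(2024, 1, 5)},
]
issues = check_ae_conmed(aes, conmeds)
```

With integrated classification, the system would know which fields are AE and ConMed dates and could apply a rule like this without study-by-study programming.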

Orchestrate autonomous workflows

Leveraging insights from categorization and automation, the workbench functions as a “central nervous system” that breaks down functional silos and orchestrates workflows across the clinical ecosystem. Rather than working in isolation, data managers, CRAs, and medical monitors receive prioritized tasks ensuring the right priorities reach the right people at the right time. This drives cross-functional transparency, as the system can automatically escalate critical issues directly to leadership and trigger coordinated actions across EDC, CTMS, and Safety platforms using KRIs.

FIGURE 3

Synchronizing RBDM and RBQM

How data-specific execution (RBDM) directly aligns with and supports each stage of the broader, cross-functional RBQM framework

V. Measuring success

To sustain momentum and secure further investment in risk-based initiatives, CDM teams must be able to show their value via metrics that reflect both operational efficiency and data integrity.

Following the implementation of the RBDM framework, teams should monitor the following three categories of metrics to quantify impact. Note: We are not suggesting an immediate mandate to track every KPI listed below. To prove the initial value of your risk-based efforts, choose one or two high-impact metrics from each category to establish your baseline.

Efficiency metrics

The most immediate benefit of a workbench-centric RBDM approach is the reduction of traditional bottlenecks. By automating manual tasks and focusing on critical data, teams should see a measurable improvement in:

Time to lock

Reducing the duration from the last patient’s last visit (LPLV) to database lock (DBL). Substantial reductions come from both automating data aggregation and cleaning, and from eliminating the long tail of non-critical data cleaning.

Query efficacy

How many queries are leading to a data change? Low query efficacy means high site burden for low value.

Query resolution cycle time

Tracking how quickly critical queries are addressed compared to non-essential ones ensures that the most vital data is cleaned first.
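The three efficiency metrics above reduce to simple arithmetic. The sketch below assumes a hypothetical query record with `opened`, `closed`, `is_critical`, and `data_changed` fields; the dates and records are invented.

```python
from datetime import date
from statistics import median

def time_to_lock_days(lplv, dbl):
    """Days from last patient's last visit (LPLV) to database lock (DBL)."""
    return (dbl - lplv).days

def query_efficacy(queries):
    """Share of queries that led to a data change."""
    return sum(q["data_changed"] for q in queries) / len(queries) if queries else 0.0

def median_cycle_time(queries, critical):
    """Median days from open to close for critical or non-critical queries."""
    days = [(q["closed"] - q["opened"]).days
            for q in queries if q["is_critical"] == critical]
    return median(days) if days else None

# Invented sample records, for illustration only.
queries = [
    {"is_critical": True, "data_changed": True,
     "opened": date(2024, 3, 1), "closed": date(2024, 3, 4)},
    {"is_critical": True, "data_changed": False,
     "opened": date(2024, 3, 2), "closed": date(2024, 3, 7)},
    {"is_critical": False, "data_changed": False,
     "opened": date(2024, 3, 1), "closed": date(2024, 3, 15)},
]
lock_days = time_to_lock_days(date(2024, 3, 1), date(2024, 3, 29))
efficacy = query_efficacy(queries)
```

Comparing `median_cycle_time(queries, True)` with `median_cycle_time(queries, False)` shows whether critical queries are in fact being resolved first.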

Quality metrics

In a risk-based model, success is not defined by zero errors, but by the absence of errors that matter. CDM should pivot its reporting to distinguish between minor administrative oversights and systemic risks:

Critical error rate

Focusing specifically on the accuracy of CtQ factors and primary endpoints.

Signal detection latency

Measuring the time elapsed between a KRI/QTL threshold breach and the documented cross-functional response.

Quality tolerance conformance

Tracking the percentage of trial milestones that stayed within established parameters.

Resource metrics

RBDM fundamentally changes how a data manager spends their day. These metrics capture the “promotion” of the data manager from a reactive cleaner to a proactive strategist:

FTE allocation shift

Quantifying the reduction in hours spent on manual movement of files or generation of reports, and the subsequent increase in hours spent on cross-functional orchestration and risk analysis.

Review proportionality

Measuring the ratio of effort spent on Tier 1 (critical) data versus Tier 2 (non-critical) data.

Automation coverage

Tracking the percentage of data checks that are performed automatically within the workbench versus those requiring manual intervention.
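Review proportionality and automation coverage are both ratios, sketched below. The function names and the choice to return infinity when no Tier 2 effort is logged are assumptions made for illustration.

```python
def review_proportionality(tier1_hours, tier2_hours):
    """Ratio of review effort on Tier 1 (critical) data to Tier 2 (non-critical) data."""
    if tier2_hours == 0:
        return float("inf")  # assumption: no Tier 2 effort means an unbounded ratio
    return tier1_hours / tier2_hours

def automation_coverage(automated_checks, manual_checks):
    """Share of data checks performed automatically."""
    total = automated_checks + manual_checks
    return automated_checks / total if total else 0.0
```

For example, 30 hours on Tier 1 data against 10 hours on Tier 2 data gives a ratio of 3.0, and 150 automated checks out of 200 total gives 75% coverage.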

Demonstrating ROI

By tracking these metrics, CDM leaders can provide clear evidence that RBDM is not just a theory or a compliance exercise, but a significant driver of business results. By focusing on what matters most, CDM can deliver higher-quality results in less time, effectively turning the data management function into a competitive advantage.

VI. Conclusion: RBDM as a competitive advantage

The transition to RBDM is no longer a theoretical debate about the future; it is an operational necessity. As trial designs become increasingly complex and data volumes continue to climb, the legacy check-everything model is reaching its breaking point.

The risk of the status quo

Choosing to maintain traditional EDC-centric processes carries a cost that extends beyond simple inefficiency. CDM teams that remain in implementation paralysis face three significant risks:

RISK #1

Compromised data quality

In an era of massive data variety, manual review is more likely to miss systemic signals while becoming bogged down in non-critical noise.

RISK #2

Talent burnout

Forcing highly skilled data managers to perform rote, manual reconciliation across spreadsheets leads to attrition and stifles the professional growth required for modern drug development. Research from Veeva found that, of the anticipated consequences of trial inefficiencies, burnout is a top concern for data managers (65%), second only to data quality issues (71%).

RISK #3

Operational irrelevance

As trials shift toward complex, multi-modal designs, teams tethered to legacy EDC-centric workflows will become the primary bottleneck, unable to support the speed and agility the business requires.

The next generation of clinical data management

The alternative is a future where CDM is no longer a reactive data processing center, but a proactive strategic partner and the high-speed engine of clinical development. By adopting a workbench-centric architecture and activating the pillars of classification, automation, and orchestration, CDM provides the high-fidelity data that powers the entire clinical ecosystem.

The time to lead is now

The most important takeaway for CDM leaders is this: Do not wait. While the broader enterprise-wide RBQM framework may still be evolving, the tools and methodologies for RBDM are available today. The clinical data workbench provides the foundation you need to secure your critical data and lead the transition to risk-based efficiency.

By taking action now, CDM can break the cycle of paralysis and demonstrate what is possible when data management is driven by risk proportionality, enabled by technology, and focused on quality. The workbench is ready. The industry is waiting. The time to lead is now.